A bit of routine maintenance on the maze code for my micromouse turned into a possible real problem that has been waiting to surface for years now. In spite of running much the same flooding and path generating code since my first successful mouse many years back, I now discover it could have failed on complex mazes.

A couple of years back, I gave a talk about creating command lists to control the fast running of a micromouse. The method used a fairly large and dumb-looking state machine that could generate command lists much more efficiently than my old method. Last year, I gave a presentation about flooding the maze with values that reflected the actual cost of running along diagonals and straights. This would let the mouse make better decisions about the path it should take. For example, longer, straighter paths may be faster than shorter paths with more turns.

Getting around to change

For one reason or another, I never really got around to putting these two components together in the mouse for a contest. they had only been used in practice. After all, I had a scheme that was proving reasonably successful and a competition is no place to be testing new code.

After the Taiwan 2016 contest, I was a bit puzzled as to why Decimus 4E took the shorter path instead of using the longer, but potentially faster path. So, I decided to tidy up the code and get these things sorted out in plenty of time for Japan in a couple of months.

Simulating for success or “Fake it until you make it”

I do not have access to a full-size maze. Even if I did, I could not practically build many different test mazes to see how the revised code worked. Instead, I wrote a small program that would read a maze file from disk and generate a command list using the old method and the new method and then compare the two. My maze file collection has nearly 450 mazes so I was confident that, if both methods gave the same result, the second was going to be OK to use.

So much for the theory

Not only did the new method give some minor differences across a whole range of files, there were a small number for which neither method gave a solution. In some cases, the code crashed completely. In a contest a code failure like that would probably manifest itself as the mouse failing to complete a search since there would come a point where the solver would discover enough of the maze to generate the error. The mouse may then either cycle endlessly between states or simply halt in its tracks.

Pathological Mazes

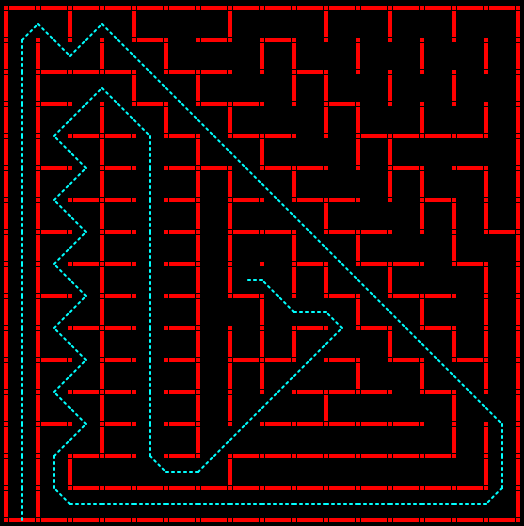

Most puzzling was the result with this test maze:

No matter what I did, I could not get a successful path with any of the old or new techniques. It is fixed now. It turned out that the maze contains a maximal length diagonal and I had miscalculated how long that could be. My code only allowed for 24 cells traversed in a diagonal so I exceeded the bounds of an array of costs used in flooding the maze. This is a classic error and one I should have been more prepared for. Worse, on the mouse I have no protection agains overrunning an array boundary and so the result would be unpredictable. On the PC, I just get a segmentation fault and an error message on the console.

Whatever Next

Now I wonder if this error may have shown up before while running the mouse. At least it will not happen again and a potential disaster has been averted. Or, of course, it is a disaster delayed because there may be any number of such bugs in the code. Even after testing against 450 mazes, how can I be sure I have a guaranteed correct solution and that there is not some other pathological maze that will kill my mouse in a contest. For that matter, how confident are you that your solution is correct?

Happy bug hunting.

Could you share your maze collection?

Sure. I will try and get something up today or tomorrow.